One of the interesting sidelines to come out of the remarkable leaked NYT innovation report in the last few days is the fact that traffic to the NYT homepage has halved in two years. It’s an intriguing statistic, and more than one media outlet has run with it to create a beguiling narrative about how the homepage is dead, or at the very least dying, along with why that is and what it means for news organisations.

But what’s true for the NYT is certainly not true for the whole of the rest of the industry. Other pages, such as articles and tag pages, are becoming more important for news organisations, but that doesn’t mean the homepage no longer matters, or that losing homepage traffic is a normal and accepted shift in this new digital age. Losing traffic proportionately makes sense, as other pages take a growing share of visits, but real-terms traffic loss looks rather unusual.

Audience stats like this are usually closely guarded secrets because of their commercial sensitivity. But it’s fair to suggest that homepage traffic (at least, to traditionally organised news homepages) is a reasonable indicator of brand loyalty, of interest in what that organisation has to say, and of trust that the organisation can provide an interesting take on the day. Bookmarking the homepage or setting it as the start point for an internet journey is an even bigger mark of faith, a suggestion that one site will tell you what’s most important at any given moment when you log on. It’s very hard even for sites themselves to measure bookmark stats, though, never mind to get the sort of broad competitor data that would shed light on whether that behaviour is declining.

It’s plausible, therefore, that brand search would be a rough indicator of brand loyalty and therefore of homepage interest; the New York Times is declining there, while the Daily Mail, for example, has been rocketing to new highs recently. I would be incredibly surprised if the Mail shares this pessimism about the health of the homepage, based on its own numbers. (That’s harder to measure for The Atlantic, whose marine namesake muddies the search comparison somewhat.)

The death of the homepage, much like that of SEO and of pageviews as a metric, has been greatly exaggerated. What’s happening here, as Martin Belam points out, is more complicated than that. As the internet ages, the older, standard ways of doing business and distributing content are changing, and are being joined by newer models and methods. Joined, not supplanted, unless of course you’ve created your new shiny thing purely to focus on the new stuff rather than the old stuff, the way BuzzFeed focuses on social and Quartz doesn’t have any real homepage at all.

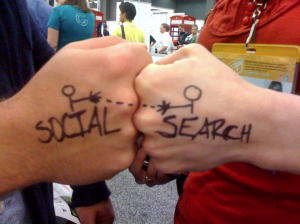

You need to be thinking about SEO and social, pageviews and engagement metrics, the homepage and the article page. Older techniques don’t die just because we’ve all spotted something newer and sexier, unless the older thing stopped serving a genuine need; the resurgence of email is proof enough of that. Diversify your approach. Beware of zombies.