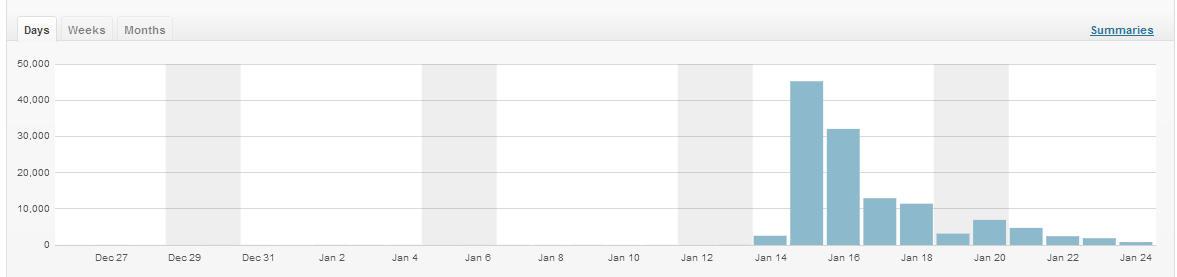

Last week, my other half wrote a rather amusing blog post about the Panasonic Toughpad press conference he went to in Munich. He published on Monday afternoon, and by the time he went out on Monday evening the post had had just over 600 views. I texted him to tell him when it passed 800, making it the best single day in his blog’s sporadic, year-long history.

Next day it hit 45,000 views, and broke our web hosting. Over 72 hours it got more than 100,000 views, garnered 120 comments, was syndicated on Gizmodo and brought Grant about 400 more followers on Twitter. Here’s what I learned.

1. Site speed matters

The biggest limit we faced during the real spike was CPU usage. We’re on Evohosting, which uses shared servers and allots a certain amount of usage per account. With about 180-210 concurrent visitors and 60-70 page views a minute, according to Google Analytics real-time stats, the site had slowed to a crawl and was taking about 20 seconds to respond.

WordPress is a great CMS, but it’s resource-heavy. Aside from single-serving static HTML sites, I was running Look, Robot; this blog; Zombie LARP; and, when I checked, five other WordPress installations that were either test sites or dormant projects from the past and/or future. Some of them had caching on, some didn’t; Grant’s blog was one of the ones that didn’t.

So I fixed that. Excruciatingly slowly, of course, because everything took at least 20 seconds to load. Deleting five WordPress sites, deactivating about 15 or 20 non-essential plugins, and installing WP Super Cache sped things up to a load time between 7 and 10 seconds – still not ideal, but much better. The number of concurrent visitors on site jumped up to 350-400, at 120-140 page views a minute – no new incoming links, just more people bothering to wait until the site finished loading.

2. Do your site maintenance before the massive traffic spike happens, not during

Should be obvious, really.

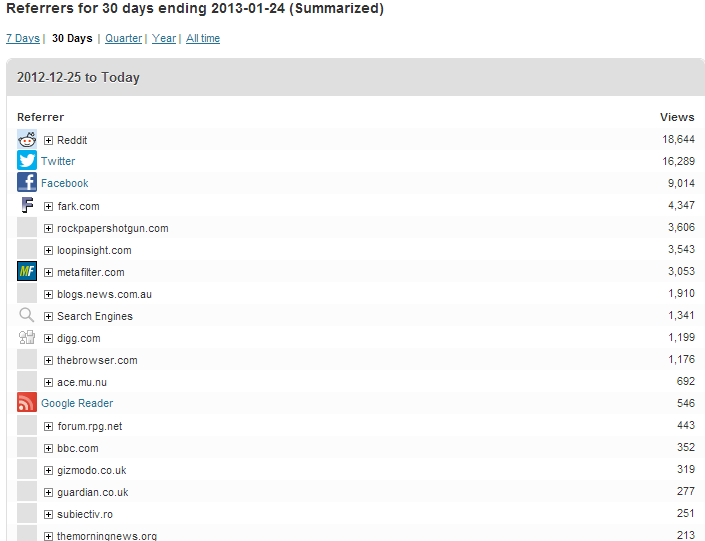

3. Things go viral in lots of places at once

Grant’s post started out on Twitter, but spread pretty quickly to Facebook off the back of people’s tweets. From there it went to Hacker News (where it didn’t do well), then Metafilter (where it did), then Reddit, then Fark, at the same time as sprouting lots of smaller referrers, mostly tech aggregators and forums. The big spike of traffic hit when it was doing well from Metafilter, Fark and Reddit simultaneously. Interestingly, the Fark spike seemed to have the longest half-life, with Metafilter traffic dropping off more quickly and Reddit more quickly still.

4. It’s easy to focus on activity you can see, and miss activity you can’t

Initially we were watching Twitter pretty closely, because we could see Grant’s tweet going viral. Being able to leave a tab open with a live search for a link meant we could watch the spread from person to person. Tweeters with large follower counts tended to be more likely to repost the link rather than retweeting, and often did so without attribution, making it hard to work out how and where they’d come across it. But it was possible to track back individual tweets based on the referrer string, thanks to the t.co URL wrapper. From some quick and dirty maths, it looks to me like the more followers you have, the smaller the click-through rate on your tweets – but the greater the likelihood of retweets, for obvious reasons.
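For anyone wanting to do the same sort of tracking from raw logs rather than an analytics dashboard, here is a rough sketch of the idea. It assumes you have a plain list of referrer URLs (the sample data and the function name `tally_referrers` are made up for illustration), and it assumes each distinct t.co path maps to one wrapped link in one tweet, which is what made the tracing possible for us.

```python
from collections import Counter
from urllib.parse import urlparse


def tally_referrers(referrers):
    """Count hits per referring host, and per t.co short link.

    `referrers` is an iterable of referrer URL strings; an empty
    string is treated as direct traffic.
    """
    by_host = Counter()
    by_tco_link = Counter()
    for ref in referrers:
        parsed = urlparse(ref)
        host = parsed.netloc.lower() or "(direct)"
        by_host[host] += 1
        # Assumption: each t.co path identifies one wrapped link,
        # so grouping by path separates the tweets that sent traffic.
        if host == "t.co":
            by_tco_link[parsed.path.lstrip("/")] += 1
    return by_host, by_tco_link


# Hypothetical sample data, not real log entries.
sample = [
    "http://t.co/abc123",
    "http://t.co/abc123",
    "http://t.co/xyz789",
    "http://www.metafilter.com/",
    "",
]
by_host, by_tco_link = tally_referrers(sample)
print(by_host.most_common())
print(by_tco_link.most_common())
```

Dividing the clicks for one short link by the follower count of whoever tweeted it gives the rough click-through figure I mention above; it is quick and dirty, because it ignores anyone who saw the link via a retweet.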

Around midday, Facebook overtook Twitter as a direct referrer. We’d not been looking at Facebook at all. Compared to Twitter and Reddit, Facebook is a bit of a black box when it comes to analytics. Tonnes of traffic is coming, but who from? I still haven’t been able to find out.

5. The more popular an article is, the higher the bounce rate

This doesn’t *always* hold true, though I can’t personally think of a time when I’ve seen it fail. Reddit in particular is a very high-bounce referrer, due to its nature, and news as a category tends to see very high bounce rates, especially from article pages. Even so, it does seem that the more popular something is, the more likely people are to leave without reading further. Look, Robot’s bounce rate went from about 58% across the site to 94% overall in 24 hours.

My feeling is that this is down to the ways people come across links. Directed searching for information is one way: that’s fairly high-bounce, because a reader hits your site and either finds what they’re looking for or doesn’t. Second clicks are tricky to get. Then there’s social traffic, where a click tends to come in the form of a diversion from an existing path: people are reading Twitter, or Facebook, or Metafilter, they click to see what people are talking about, then they go straight back to what they were doing. Getting people to break that path and browse your site instead – distracting them, in effect – is a very, very difficult thing to do.
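To make the arithmetic concrete, here is a minimal sketch, assuming the usual definition of bounce rate as the share of sessions that view exactly one page. The function names and the session counts are hypothetical; only the 58% and 94% figures come from our stats.

```python
def bounce_rate(pages_per_session):
    """Fraction of sessions that viewed exactly one page."""
    if not pages_per_session:
        return 0.0
    bounces = sum(1 for pages in pages_per_session if pages == 1)
    return bounces / len(pages_per_session)


def blended_bounce_rate(groups):
    """Session-weighted bounce rate across traffic groups.

    `groups` is a list of (sessions, bounce_rate) pairs.
    """
    total_sessions = sum(sessions for sessions, _ in groups)
    if total_sessions == 0:
        return 0.0
    total_bounces = sum(sessions * rate for sessions, rate in groups)
    return total_bounces / total_sessions


# Hypothetical illustration: a small regular audience bouncing at 58%
# is swamped by a much larger wave of social visitors bouncing at 95%.
regular = (1_000, 0.58)
viral = (50_000, 0.95)
print(f"{blended_bounce_rate([regular, viral]):.2%}")
```

The point of the second function is that the site-wide figure is dominated by whichever group sends the most sessions, so a big wave of click-and-leave social traffic drags the overall number up even if regular readers behave exactly as before.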

6. Fark leaves a shadow

Fark’s an odd one – not a site that features frequently in roundups of traffic drivers, but it can still be a big referrer to unusual, funny or plain daft content. It works like a sort of edited Reddit – registered users submit links, and editors decide what goes on the front page. Paying subscribers to the site can see everything that’s submitted, not just the edited front. I realised before it happened that Grant was about to get a link from their Geek front, when the referrer total.fark.com/greenlit started to show up in incoming traffic – that URL, behind a paywall, is the place where links that have been OKed are queued to go on the fronts.

7. The front page of Digg is a sparsely populated place these days

I know that Grant’s post sat on the front page of Digg for at least eight hours. In total, it got just over 1,000 referrals. By contrast, the post didn’t make it to the front page of Reddit, but racked up more than 20,000 hits, mostly from r/technology.

8. Forums are everywhere

I am always astonished at the vast plethora of niche-interest forums on the internet, and the amount of traffic they get. Much like email, they’re not particularly sexy – no one is going to write excitable screeds about how forums are the next Twitter or how exciting phpBB technology is – but millions of people use them every day. They’re not often classified as ‘social’ referrers by analytics tools, despite their nature, because identifying what’s a forum and what’s not is a pretty tricky task. But they’re everywhere, and while most only have a few users, in aggregate they work to drive a surprising amount of traffic.

Grant’s post got picked up on forums on Bad Science, RPG.net, Something Awful, the Motley Fool, a Habbo forum, Quarter to Three, XKCD and a double handful of more obscure and fascinating places. As with most long tail phenomena, each one individually isn’t a huge referrer, but the collection gets to be surprisingly big.

9. Timing is everything…

It’s hard to say what would have happened if that piece had gone up this week instead, but I don’t think it would have had the traffic it has. Grant’s post struck a chord – the ludicrous nature of tech events – and tapped into post-CES ennui and the utter daftness that was the Qualcomm keynote this year.

10. …but anything can go viral

Last year I was on a games journalism panel at the Guardian, and I suggested that it was a good idea for aspiring journalists to write on their own sites as though they were already writing for the people they wanted to be their audience. I said something along the lines of: you never know who’s going to pick it up. You never know how far something you put online is going to travel. You never know: one thing you write might take off and put you under the noses of the people you want to give you a job. It’s terrifying, because anything you write could explode – and it’s hugely exciting, too.

Yeah, WordPress is great, but if you’re expecting any volume of traffic at all, you need a caching layer. Whenever I set up a new WP blog, I routinely add local and CDN-based caching, just to be safe.

And people have been underestimating forums for years. For all the web-centric press’s rampant neophilia, new online things very rarely kill off old online things – they just drive them into niches.

Aye. I’d gotten used to not getting much traffic at all, so I’d gotten rather lazy about caching. I’ve learned my lesson now, I hope.

Good post – mirrors a lot of what we’ve seen recently with our own traffic. +1 on needing a caching layer. We use Fastly – and it has proved to be cheap & very good at doing WP content. Highly recommended.